Stubsack: weekly thread for sneers not worth an entire post, week ending 22nd February 2026

-

OT: I tried switching away from my instance to quokk.au because it becomes harder and harder to justify being on a pro-ai instance, but it seems that your posts stopped federating recently.

Which makes that a bit harder to do. Any clue why?

bluemonday1984@awful.systems update: quokka reached out to me and apparently you had been banned on another instance for report abuse and that ban had synchronised to quokk.au. You should be unbanned now which means the next stubsack should federate again.

E: I do not know how tags work E2: why does that format to a mailto link?

-

Want to wade into the snowy surf of the abyss? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid.

Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right-wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this. Also, hope you had a wonderful Valentine’s Day!)

new episode of odium symposium. we look at rousseau’s program for using universal education to turn women into drones

-

The phrase “ambient AI listening in our hospital” makes me hear the “Dies Irae” in my head.

I’m personally hearing “Morceaux” myself.

-

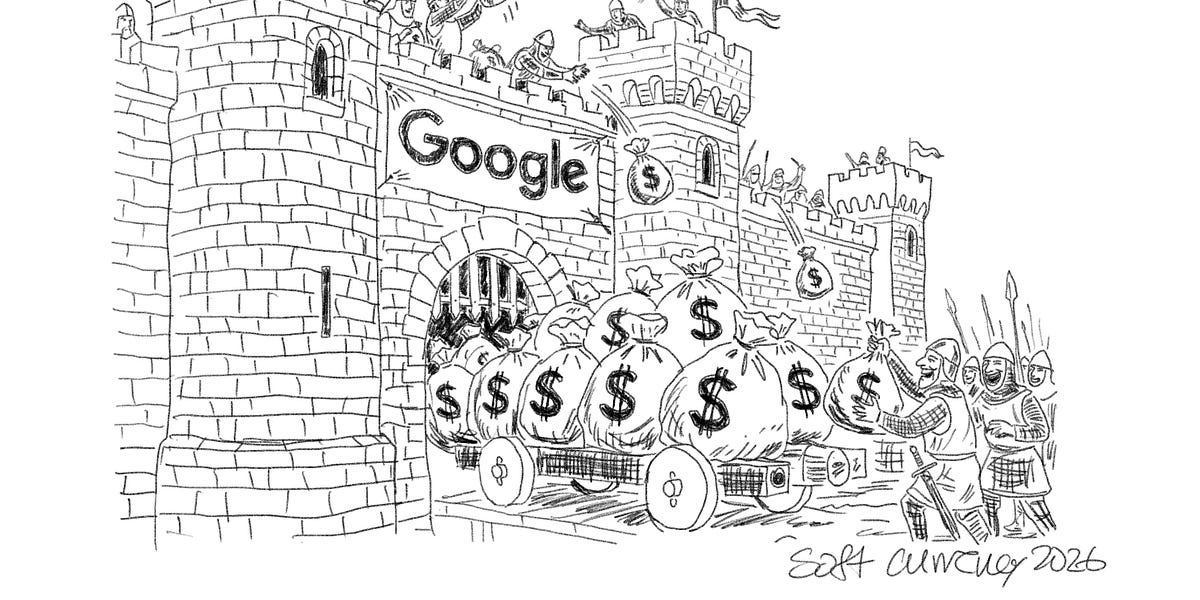

The Dangerous Economics of Walk-Away Wealth in the AI Talent War

Watch now | How firms are accidentally paying their best employees to become their biggest competitors.

(softcurrency.substack.com)

- Anthropic (Medium Risk) Until mid-February of 2026, Anthropic appeared to be happy, talent-retaining. When an AI Safety Leader publicly resigns with a dramatic letter stating “the world is in peril,” the facade of stability cracks. Anthropic is a delayed fuse, just earlier on the vesting curve than OpenAI. The equity is massive ($300B+ valuation) but largely illiquid. As soon as a liquidity event occurs, the safety researchers will have the capital to fund their own, even safer labs.

WTF is “even safer” ??? how bout we like just don’t create the torment nexus.

Wonder if the 50% attrition prediction comes to pass though…

So they’ve highlighted an interesting pattern to compensation packages, but I find their entire framing of it gross and disgusting, in a capitalist techbro kinda way.

Like the way they describe Part III’s case study:

The uncapped payouts were so large that it fractured the relationship between Capital (Activision) and Labor (Infinity Ward).

Activision was trying to cheat its labor after they made it massive profits! Describing it as a fractured relationship denies Activision’s agency in choosing to be greedy capitalist pigs.

The talent that left formed the core of the team that built Titanfall and Apex Legends, franchises that have since generated billions in revenue, competing directly in the same first-person shooter market as Call of Duty.

Activision could have paid them what they owed them, and kept paying them incentive based payouts, and come out billions of dollars ahead instead of engaging in short-sighted greedy behavior.

I would actually find this article interesting and tolerable if they framed it as “here are the perverse incentives capitalism encourages businesses to create” instead of “here is how to leverage the perverse incentives in your favor by paying your employees just enough, but not enough to actually reward them a fair share” (not that they were honest enough to use those words).

WTF is “even safer” ??? how bout we like just don’t create the torment nexus.

I think the writer isn’t even really evaluating that aspect, just thinking in terms of workers becoming capital owners and how companies should try to prevent that to maximize their profits. The idea that Anthropic employees might care on any level about AI safety (even hypocritically and ineffectually) doesn’t enter into the reasoning.

-

can all of rationalism be reduced to logorrhea with load-bearing extreme handwaving (in this case, agentic self preservation arises through RL scaling)?

no there’s also racist twitter

-

need a word for the sort of tech ‘innovation’ that consists of inventing and monetizing new types of externalities which regulators aren’t willing to address. like how bird scooters aren’t a scam, but they profit off of littering sidewalk space so that ppl with disabilities can’t get around

EDIT: a similar, perhaps the same concept is innovation which functions by capturing or monopolizing resources that aren’t as yet understood to be resources. in the bird example, we don’t think of sidewalk space as a capturable resource, and yet

-

parasitech?

-

parasitech?

I guess that doesn’t emphasise the “innovation” aspect much

-

It’s funny that such a thing is rare enough in the corpus to come out so recognizably in the output.

ime much like the SAFe diagrams, this diagram is all over a certain type of “this is how your corporation should be developing software” thotleader posts

(although I imagine that, given the 2~3y lag, all those heads have pivoted to promptpraise)

-

parasitech?

maybe “parasitic innovation”?

-

Baldur Bjarnason gives his thoughts on the software job market, predicting a collapse regardless of how AI shakes out:

If you model the impact of working LLM coding tools (big increase in productivity, little downside) where the bottlenecks are largely outside of coding, increases in coding automation mostly just reduce the need for labour. I.e. 10x increase means you need 10x fewer coders, collapsing the job market

If you model the impact of working LLM coding tools with no bottlenecks, then the increase in productivity massively increases the supply of undifferentiated software and the prices you can charge for any software drops through the floor, collapsing the job market

If the models increase output but are flawed, as in they produce too many defects or have major quality issues, Akerlof’s market for lemons kicks in, bad products drive out good, value of software in the market heads south, collapsing the job market

If the model impact is largely fictitious, meaning this is all a scam and the perceived benefit is just a clusterfuck of cognitive hazards, then the financial bubble pop will be devastating, tech as an industry will largely be destroyed, and trust in software will be zero, collapsing the job market

I can only think of a few major offsetting forces:

- If the EU invests in replacing US software, bolstering the EU job market.

- China might have substantial unfulfilled domestic demand for software, propping up their job market

- Companies might find that declining software quality harms their bottom-line, leading to a Y2K-style investment in fixing their software stacks

But those don’t seem likely to do more than partially offset the decline. Kind of hoping I’m missing something

-

Do we have any idea why some of the Zizians ended up in Vermont? The only thing in their network that comes to mind is the Monastic Academy for the Preservation of Life on Earth (MAPLE, a Buddhist-flavoured CFAR offshoot with the usual Medium post accusing leaders of sexual and psychological abuse)

Vermont and New Hampshire have clusters of generic Libertarians.

-

need a word for the sort of tech ‘innovation’ that consists of inventing and monetizing new types of externalities which regulators aren’t willing to address. like how bird scooters aren’t a scam, but they profit off of littering sidewalk space so that ppl with disabilities can’t get around

EDIT: a similar, perhaps the same concept is innovation which functions by capturing or monopolizing resources that aren’t as yet understood to be resources. in the bird example, we don’t think of sidewalk space as a capturable resource, and yet

In economic terms it’s less rent seeking and more rent creation. Like, taking advantage of public sidewalk space may not be a rent in the strictest sense given that the revenue model is still people paying for the service, but the ability to provide that service is absolutely predicated on taking over and monopolizing this public resource to the maximal degree possible.

By historical allegory, harkening back to the original destruction of the Commons, we’re looking at Enclosure 2: Frisco Drift.

Let’s also not lose sight of the fact that those sidewalks aren’t a natural formation, and that it’s the city government who ultimately takes on the burden of their construction and maintenance. This kind of neo-enclosure of public resources is then another kind of invisible subsidy.

-

In March 2025, the large language model (LLM) GPT-4.5, developed by OpenAI in San Francisco, California, was judged by humans in a Turing test to be human 73% of the time — more often than actual humans were. Moreover, readers even preferred literary texts generated by LLMs over those written by human experts.

do you know how hard it is to write something that aged poorly months before it was written? it’s in the public consciousness that LLMs write like absolute shit in ways that are very easy to pick out once you’ve been forced to read a bunch of LLM-extruded text. inb4 some asshole with AI psychosis pulls out “technically ChatGPT’s more human than you are, look at the statistics” regarding the 73% figure I guess. but you know when statistics don’t count!

A March 2025 survey by the Association for the Advancement of Artificial Intelligence in Washington DC found that 76% of leading researchers thought that scaling up current AI approaches would be ‘unlikely’ or ‘very unlikely’ to yield AGI

[…] What explains this disconnect? We suggest that the problem is part conceptual, because definitions of AGI are ambiguous and inconsistent; part emotional, because AGI raises fear of displacement and disruption; and part practical, as the term is entangled with commercial interests that can distort assessments.

no you see it’s the leading researchers that are wrong. why are you being so emotional over AGI. we surveyed Some Assholes and they were pretty sure GPT was a human and you were a bot so… so there!

-

Posting for archival and indexing purposes: u/GorillasAreForEating found an Urbit post titled “Quis cancellat ipsos cancellores?” which complains that Aella takes it on herself to exclude people and movements from the broader LessWrong/Effective Altruist community. The poster says that Aella was the anonymous person who pushed CFAR to finally do something about Brent Dill, because she was roommates with “Persephone.” He or she does not quite say that any of the accusations were untrue, just that “an anonymous, unverified report” says that some details were changed by an editor, and that her Medium post was of “dramatically lower fidelity, but higher memetic virulence” than Brent’s buddies investigating him behind closed doors (Dill posted about domming a 16-year-old who he met when she was 15 and he was ~27). The poster accuses Aella of using substances and BDSM games to blur the line of consent.

The post names Joscha Bach as someone Aella tried to exclude. We recently talked about Bach’s attempt to get Jeffrey Epstein to fund an event where our friends would speak.

Often, people in messed-up situations point at a very similar situation and say “at least we are not like that.” I hope that all of these people find friends who can give them perspective that none of these communities are healthy or just. Whether you are into bull sessions or polyamory, there are healthy communities to explore in any medium-sized city!

-

I looked it up, and this one is credited to Glen Wexler, who is an actual artist with a pretty distinct style and yes, he’s been incorporating AI into his process lately, and I guess he did use it here (those windows on those buildings are sus as hell, and the overall sharpness of the image just screams AI).

So it’s not outright slop, but still pretty disappointing and incongruous coming from this band. Their last two records were examining our society’s alienation through technology, at times to the point of “phone bad!” level nagging, but using the most literally destructive technology of them all is fine, as long as it helps keep the costs down, I guess?

And it just doesn’t look good, but come to think of it, most of their albums have bad cover art, it’s almost like they do it on purpose. Love the music, though.

It’s too bad if true, I can’t unsee it now. for reference: https://failureband.bandcamp.com/album/location-lost

-

maybe “parasitic innovation”?

Something like “innovations in parasitic enclosure” may perhaps be a phrase that can give a handle on it, yeah

-

context: I wanted to know if the open source projects currently being spammed with PRs would be safe from people running slop models on their computer if they weren’t able to use claude or whatever. Answer: yes, these things are still terrible

but while I was searching I found this comment and the fact that people hated it is so funny to me. It’s literally the person who posted the thread. less thinking and words, more hype links please.

::: spoiler conversation
https://www.reddit.com/r/LocalLLaMA/comments/1qvjonm/first_qwen3codernext_reap_is_out/o3jn5db/

32k context? is that usable for coding?

(OP’s response, sitting at a steady -7 points)

LLMs are useless anyway so, okay-ish, depends on your task obviously

If LLMs were actually capable of solving actual hard tasks, you’d want as much context as possible

A good way to think about is that tokens compress text roughly 1:4. If you have a 4MB codebase, it would need 1M tokens theoretically.

That’s one way to start, then we get into the more debatable stuff…

Obviously text repeats a lot and doesn’t always encode new information each token. In fact, it’s worse than that, as adding tokens can _reduce_ information contained in text, think inserting random stuff into a string representing dna. So to estimate how much ctx you need, think how much compressed information is in your codebase. That includes stuff like decisions (which LLMs are incapable of making), domain knowledge, or even stuff like why does double click have 33ms debounce and not 3ms or 100ms in your codebase which nobody ever wrote down. So take your codebase, compress it as a zip at normal compression level, and then think how large the output problem space is, shrink it down quadratically, and you have a good estimate of how much ctx you need for LLMs to solve the hardest problems in your codebase at any given point during token generation
:::

*emphasis added by me
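
For anyone who wants to poke at the quoted back-of-envelope numbers, here is a minimal sketch of that arithmetic. It assumes the comment's rough rule of about 4 bytes of text per token (a rule of thumb, not a measured tokenizer ratio), treats "32k" as 32,768 tokens, and uses zlib at its default level as a stand-in for "zip at normal compression level". All function names are made up for illustration, and the vaguer "shrink it down quadratically" step is left out because the comment never says how to compute it.

```python
import zlib

# Assumption: the quoted comment's rough 1:4 rule, i.e. about 4 bytes per token.
BYTES_PER_TOKEN = 4


def estimate_tokens(size_bytes: int, bytes_per_token: int = BYTES_PER_TOKEN) -> int:
    """Rough token count for a given amount of text, rounded up."""
    return -(-size_bytes // bytes_per_token)


def fits_in_context(size_bytes: int, context_tokens: int) -> bool:
    """True if the estimated token count fits inside the context window."""
    return estimate_tokens(size_bytes) <= context_tokens


def compressed_size(data: bytes) -> int:
    """Size in bytes after zlib at its default level (stand-in for a normal zip)."""
    return len(zlib.compress(data))


if __name__ == "__main__":
    codebase_bytes = 4_000_000  # the comment's hypothetical 4MB codebase
    print(estimate_tokens(codebase_bytes))          # estimated tokens needed
    print(fits_in_context(codebase_bytes, 32_768))  # does it fit in 32k?
```

Under those assumptions the 4MB codebase comes out at about a million tokens, roughly thirty times what a 32k window holds.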